Critical Evaluation: Hallucinations, Bias, and Source Reliability

Learn to spot fabricated citations, detect hallucinated facts, evaluate bias, and triangulate claims with a practical checklist for “trust but verify.” Includes a verification lab and an end-of-lesson problem-solving task.

1) Outcomes

By the end, you can…

- Identify hallucinations (confident statements without support).

- Spot fabricated citations and suspicious bibliographic patterns.

- Assess bias (framing, omitted alternatives, loaded language).

- Triangulate claims using multiple independent sources.

- Use a practical trust-but-verify checklist in research/teaching.

Safety rules

- Never cite a source you haven’t verified exists.

- For high-stakes claims, require: source + method + data + replication (if possible).

- Prefer primary sources (original studies, official reports) over summaries.

- If evidence is unclear, label it: Unverified / Needs confirmation.

Trust but verify = treat AI output as a draft hypothesis. Verify claims with reliable sources before using them in teaching or publishing.

2) Conversation (Reliability Coach)

Practice “trust but verify” through short steps: identify claims → request evidence → check source quality → look for bias → triangulate.

Tip: A good verifier question is: “What evidence would change your conclusion?”

3) Reading + Comprehension Quiz

Reading: How to evaluate AI output critically

1 AI systems can produce fluent answers that sound authoritative even when details are wrong. This is often called a hallucination: a statement that is not supported by evidence or that misrepresents a source. Hallucinations are more likely when questions are vague, when the topic is niche, or when the model is pushed to provide citations without access to real databases.

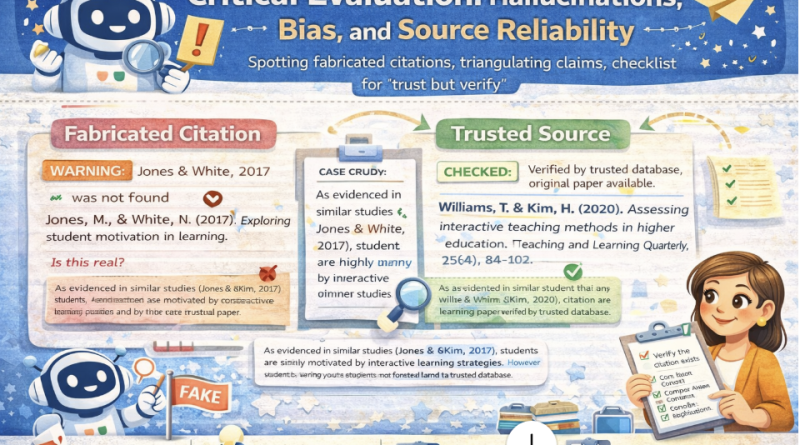

2 A major risk in academic settings is fabricated citations. Warning signs include references that cannot be found in Google Scholar, inconsistent author names, impossible journal/volume combinations, or suspiciously perfect formatting with missing key metadata. A safe rule is: if you cannot locate the source, you cannot cite it.
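
Some of these warning signs can be screened mechanically before you search for the reference by hand. The sketch below is only an illustration: the `Citation` record, its field names, and the `citation_red_flags` helper are hypothetical, and the DOI pattern checks only the general "10.registrant/suffix" shape. Passing the screen does not show that a source exists; you still have to locate it.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Citation:
    """Hypothetical minimal record for one reference supplied by an AI tool."""
    authors: str
    title: str
    journal: str
    year: Optional[int] = None
    volume: Optional[str] = None
    pages: Optional[str] = None
    doi: Optional[str] = None


# General shape of a DOI; a match does NOT prove that the DOI resolves.
DOI_SHAPE = re.compile(r"^10\.\d{4,9}/\S+$")


def citation_red_flags(c: Citation, current_year: int) -> List[str]:
    """Return human-readable warnings; an empty list still needs a manual lookup."""
    flags = []
    # Missing key metadata is one of the warning signs named in the reading.
    missing = [name for name in ("year", "volume", "pages", "doi")
               if getattr(c, name) in (None, "")]
    if missing:
        flags.append("missing metadata: " + ", ".join(missing))
    if c.doi and not DOI_SHAPE.match(c.doi):
        flags.append("DOI does not have the usual 10.xxxx/suffix shape")
    if c.year is not None and c.year > current_year:
        flags.append("publication year is in the future")
    return flags
```

For example, a placeholder record with no volume or pages, a malformed DOI, and a future year produces three flags. A record that produces none has only passed a format check and remains unverified until you find the source itself.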

3 Bias is not only about offensive content. In research, bias often appears as selective evidence, one-sided framing, or ignoring reasonable alternative explanations. A strong critical reader asks: “What assumptions are built into this answer?” and “What counter-evidence might exist?”

4 Triangulation improves reliability. Instead of trusting one source, verify a claim using multiple independent sources. Prefer primary research and official data. If sources disagree, report uncertainty and investigate reasons (methods, populations, contexts). For teaching and publishing, treat unverified AI output as a hypothesis, not as a fact.
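
One way to make the triangulation rule concrete is to record each source you checked and derive a status label from the set. The sketch below rests on stated assumptions: the `Evidence` record, the `triangulation_status` function, and the threshold of two independent origins are illustrative choices, not a standard. Independence is approximated by counting distinct origins, so two summaries of the same study count once.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Evidence:
    origin: str      # who produced it (research group, agency); hypothetical label
    supports: bool   # True if it supports the claim, False if it contradicts it
    primary: bool    # True for original studies or official data, False for summaries


def triangulation_status(evidence: List[Evidence], min_independent: int = 2) -> str:
    """Label a claim from the sources checked so far (assumed thresholds)."""
    supporting = {e.origin for e in evidence if e.supports}
    contradicting = {e.origin for e in evidence if not e.supports}
    if supporting and contradicting:
        return "Sources disagree: report uncertainty and compare methods, populations, contexts"
    if contradicting:
        return "Not supported by the sources found"
    # Require at least one primary source among the supporting ones.
    has_primary = any(e.primary for e in evidence if e.supports)
    if len(supporting) >= min_independent and has_primary:
        return "Supported"
    return "Unverified / Needs confirmation"
```

With this sketch, two blog summaries that trace back to the same origin stay at "Unverified / Needs confirmation", because they are a single origin with no primary source.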

5 A practical checklist is: identify the claim; ask for the source; check whether the source exists; evaluate methodology and relevance; and cross-check with other sources. This process turns AI into a useful assistant while protecting academic integrity.
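
The checklist is ordered, so it can be tracked as a simple sequence in which a claim stays unverified until every step is done. The following is a minimal sketch: the step wording comes from the paragraph above, while the `review_claim` function and the "Checked" label are assumptions made for illustration.

```python
from typing import Dict, Optional, Tuple

# Steps in the order given in the reading.
CHECKLIST = (
    "Identify the claim",
    "Ask for the source",
    "Check whether the source exists",
    "Evaluate methodology and relevance",
    "Cross-check with other sources",
)


def review_claim(done: Dict[str, bool]) -> Tuple[str, Optional[str]]:
    """Return (label, next step). The claim stays unverified until all steps are done."""
    for step in CHECKLIST:
        if not done.get(step, False):
            return "Unverified / Needs confirmation", step
    return "Checked", None
```

Calling `review_claim({"Identify the claim": True})` returns the unverified label together with "Ask for the source" as the next step, which keeps the order of the checklist explicit.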

Comprehension check (choose the best answer)

4) Reliability Toolkit (Hallucinations, bias, triangulation)

Hallucination red flags

Fabricated citation red flags

Bias checks (academic)

Triangulation workflow

Trust-but-verify checklist (printable)

5) Prompts + Examples (Copy & Adapt)

These prompts force AI to show uncertainty, cite verifiable sources, and propose triangulation steps.

Prompt 1 — Claim → evidence → uncertainty

Prompt 2 — Citation verification plan

Prompt 3 — Bias audit

Prompt 4 — Triangulation plan

Mini example (expected output style)

6) Listening (Two Google Voices) — “A claim is not a fact yet”

Listen to two instructors discussing hallucinations, bias, and triangulation.

7) Trust-but-Verify Lab (Paste AI output → flag risks)

Paste an AI-generated answer (or draft). The lab flags (a) strong claims without evidence, (b) citation-like strings, and (c) loaded language, and then (d) generates a triangulation plan. The flags are heuristic, so teacher judgement is still required.
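
To show what this kind of heuristic flagging can look like, here is a rough sketch of the three flags. It is not the lab's actual implementation: the word lists, the regular expressions, and the `flag_risks` function are assumptions chosen for illustration, and the sentence splitter is naive (it will break on abbreviations followed by a space).

```python
import re
from typing import Dict, List

# Illustrative patterns only; real text needs broader lists and human review.
STRONG_CLAIM = re.compile(
    r"\b(?:always|never|proves?|proven|definitely|undoubtedly)\b|\b\d+(?:\.\d+)?\s?%",
    re.IGNORECASE,
)
# Parenthetical author-year strings, or anything shaped like a DOI.
CITATION_LIKE = re.compile(r"\([A-Z][^()]*?\b(?:19|20)\d{2}[a-z]?\)|\b10\.\d{4,9}/\S+")
LOADED = re.compile(
    r"\b(?:obviously|clearly|revolutionary|groundbreaking|disastrous|undeniable)\b",
    re.IGNORECASE,
)


def flag_risks(text: str) -> Dict[str, List[str]]:
    """Flag strong claims lacking a citation, citation-like strings, and loaded words."""
    report: Dict[str, List[str]] = {
        "unsupported_strong_claims": [],
        "citation_like_strings": [],
        "loaded_language": [],
    }
    # Naive split: sentence-ending punctuation followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    for s in sentences:
        cites = [m.group(0) for m in CITATION_LIKE.finditer(s)]
        report["citation_like_strings"].extend(cites)
        if STRONG_CLAIM.search(s) and not cites:
            report["unsupported_strong_claims"].append(s)
        report["loaded_language"].extend(m.group(0).lower() for m in LOADED.finditer(s))
    return report
```

Every citation-like string the sketch finds still has to be looked up by hand. The pattern only detects the shape of a reference, so a fabricated citation is flagged in the same way as a real one.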

A) AI output to evaluate

Tip: Include any citations the AI provided.

B) Your context (optional)

Tip: High-stakes = stronger verification requirement.

Risk report

Safe AI prompt (to request verifiable evidence)

8) Problem-solving

Scenario: A colleague pasted an AI-generated paragraph into a paper. It includes three citations and a confident claim:

“AI chatbots improve speaking proficiency by 35% on average across studies.”

You suspect hallucination or cherry-picking.

Your task:

- Write a verification plan (5–8 bullets).

- List 3 red flags you would check for fabricated citations.

- Draft a triangulation strategy (at least 3 independent sources/types).

- Rewrite the claim into a cautious, evidence-aligned statement suitable for a draft.

A) Verification plan (5–8 bullets)

B) Fabricated citation red flags (3 bullets)

C) Triangulation strategy (3 bullets)

D) Cautious rewrite of the claim
